Teams and stakeholders need to track how and when teams deliver value. How many features and operational capabilities have been delivered? Understand that information by measuring actual Working Tested Features (WTFs) shipped, not low-quality proxies such as story points or date estimates.

✓ Critical ❑ Helpful ❑ Experimental

First steps to create this habit:

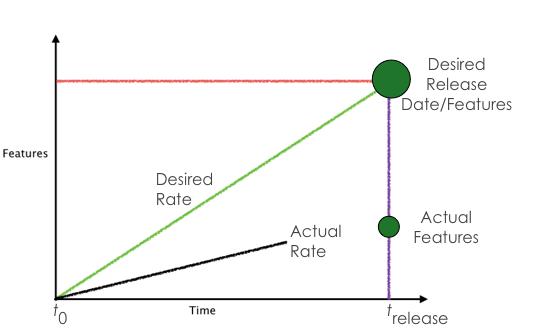

This habit gives you a good sense of the actual work completed, using a simple graph. Time runs along the X axis, and all the work that’s ready to ship accumulates on the Y axis. We call this a burnup chart.

Time on the X axis should be in days, but if you are just starting out and only delivering on several-week iterations, then use iterations.
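
As a rough illustration of the data behind such a chart, the sketch below turns a list of completion dates into a cumulative daily count. The function name `burnup_series` and the sample dates are hypothetical, made up for this example; any tool that records when a feature became ready to ship could supply the same input.

```python
from collections import Counter
from datetime import date, timedelta


def burnup_series(completed_on, start, end):
    """Return [(day, cumulative_count)] for every day from start to end inclusive.

    completed_on: one date per Working Tested Feature, the day it was ready to ship.
    """
    per_day = Counter(completed_on)  # features finished on each day
    series = []
    total = 0
    day = start
    while day <= end:
        total += per_day[day]  # days with no completions add 0
        series.append((day, total))
        day += timedelta(days=1)
    return series


# Hypothetical sample data: four features finished during one week.
completed_on = [date(2024, 3, 4), date(2024, 3, 4), date(2024, 3, 6), date(2024, 3, 7)]
series = burnup_series(completed_on, date(2024, 3, 4), date(2024, 3, 8))

for day, total in series:
    print(day.isoformat(), total)
```

Each pair is one point on the chart: the day on the X axis and the running total of shipped work on the Y axis. If you are working in iterations rather than days, the same idea applies with one point per iteration.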

Measuring a team’s actual delivery makes the value the development team has delivered transparent. It’s an important part of keeping people informed about the work being done.

When burnup charts are kept current with completed work, anyone can see which items are done. This visibility answers status questions about current work, which helps the team avoid interruptions. It also gives stakeholders a self-serve way to get status information.

This works best when the “features” or bits of completed work are roughly the same size (which they should be, otherwise the team isn’t breaking work down into manageable pieces effectively). Also, although we call them “features” here for simplicity, the chart should include any and all work the team is doing.

The speed at which a given team delivers features is a constant. It will not suddenly increase because you add team members or overtime. With consistent feature sizes, you can easily extrapolate the number of features the team can deliver at any future time by drawing a straight line.
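
The straight-line extrapolation can be sketched in a few lines. This is a minimal example that assumes a steady delivery rate and roughly equal feature sizes, as described above; the function names and the weekly totals are hypothetical.

```python
import math


def average_rate(cumulative):
    """Features delivered per period, given cumulative totals for periods 1..n."""
    if not cumulative:
        raise ValueError("need at least one data point")
    return cumulative[-1] / len(cumulative)


def project(cumulative, periods_ahead):
    """Extend the straight line: expected cumulative total after periods_ahead more periods."""
    return cumulative[-1] + average_rate(cumulative) * periods_ahead


def periods_until(cumulative, target):
    """Whole periods needed to reach target features at the average rate."""
    remaining = target - cumulative[-1]
    if remaining <= 0:
        return 0
    rate = average_rate(cumulative)
    if rate <= 0:
        raise ValueError("no delivery recorded yet, cannot extrapolate")
    return math.ceil(remaining / rate)


# Hypothetical cumulative totals at the end of each of five weeks.
weekly_totals = [2, 4, 6, 8, 10]

print(average_rate(weekly_totals))       # 2.0 features per week
print(project(weekly_totals, 3))         # 16.0 features after three more weeks
print(periods_until(weekly_totals, 17))  # 4 weeks to reach 17 features
```

The projection is only as good as the two assumptions behind it. If feature sizes vary widely, the line will mislead.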

Important: Notice that a burnup is different from a traditional “burndown” chart. A burndown chart ignores additions over time, so you could be chasing an ever-changing target without seeing it. With a burnup chart, you can more easily track changes to the total work required as feedback and learning increase over the life of the project.
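
To make the difference concrete, here is a small sketch using made-up numbers. Each snapshot records the cumulative features done and the total scope at the end of an iteration. The names and data are hypothetical.

```python
# Hypothetical end-of-iteration snapshots: (cumulative features done, total scope).
snapshots = [(5, 20), (10, 20), (15, 25), (20, 30)]


def burndown_view(snapshots):
    """Remaining work only: a single number per iteration."""
    return [scope - done for done, scope in snapshots]


def burnup_view(snapshots):
    """Delivered work and scope growth per iteration, kept as separate numbers."""
    delivered, scope_added = [], []
    prev_done, prev_scope = 0, snapshots[0][1]  # baseline: nothing done, initial scope
    for done, scope in snapshots:
        delivered.append(done - prev_done)
        scope_added.append(scope - prev_scope)
        prev_done, prev_scope = done, scope
    return delivered, scope_added


remaining = burndown_view(snapshots)
delivered, scope_added = burnup_view(snapshots)

print(remaining)    # [15, 10, 10, 10]
print(delivered)    # [5, 5, 5, 5]
print(scope_added)  # [0, 0, 5, 5]
```

In this example the remaining-work numbers stall at 10, which could be read as the team stopping. Keeping the two lines separate shows the team delivering five features every iteration while the scope grows by five in each of the last two.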

Create a baseline by monitoring your team’s burnup charts over several months. As you learn what the team is capable of delivering, you can use that information to provide more accurate and realistic release forecasts.

You may want to experiment with different data elements or graphs to determine which method best represents value for your organization. This is okay as long as you stick to actuals and avoid proxies to the extent possible. A proxy may provide some anecdotal information, but it should never be used as a substitute for actuals when that information is available. Even if using a proxy seems easier, it will only ever be an approximation.
