Answers From Experiments

Experiments let you test decisions about technology, architecture, and design alternatives. They can provide both qualitative and quantitative answers about proposed changes without betting your company’s future.

Pain Points

- No one ever tries to introduce new or better tools, designs, or practices

- New approaches don’t work out; new practices don’t create new habits

- You’re not sure what advice to take from different sources

- You’ve tried the latest and greatest technology stack, tool, or design approach and it failed to deliver results

- You’ve “adopted agile” or some other approach and it failed to deliver results

Benefits

- Lower risks and costs

- Higher quality and more immediate learning

- Easier acceptance and buy-in

- Local adaptation: tweak and tune to local context

Rather than relying on what worked elsewhere with different people, you can inspect and adapt in the proper context: yours.

Assessment

- Experiments are performed regularly. All experiments provide data for the next experiment (Major Boost)

- Experiments are occasionally performed for tech questions only (Boost)

- Experiments are occasionally performed for process adoption only (Boost)

- Experiment results are ignored (Setback)

- Experiments that do not produce the expected results lead to blame, arguments, retaliation (Significant Setback)

- Experiments are not performed at all (Disaster)

- Company-wide shift to new tools, methods, and processes with no experiments (Disaster)

Application

✓ Critical ❑ Helpful ❑ Experimental

Adoption Experiment

Do the following steps to get started.

Setup

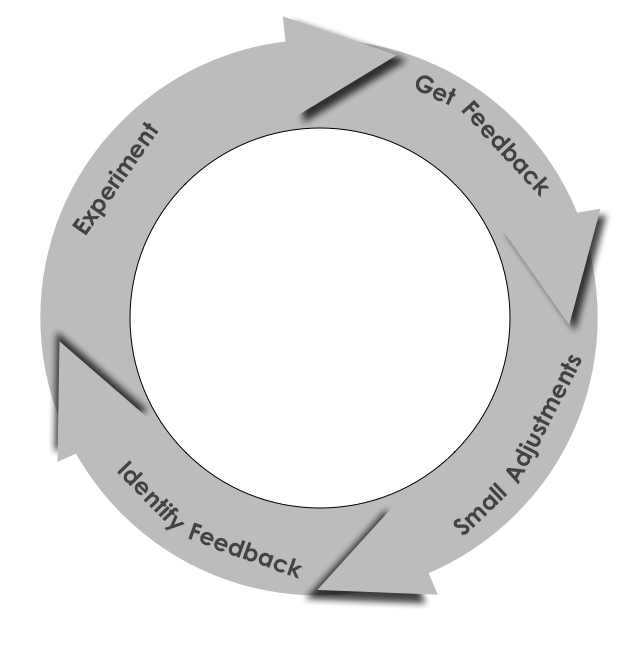

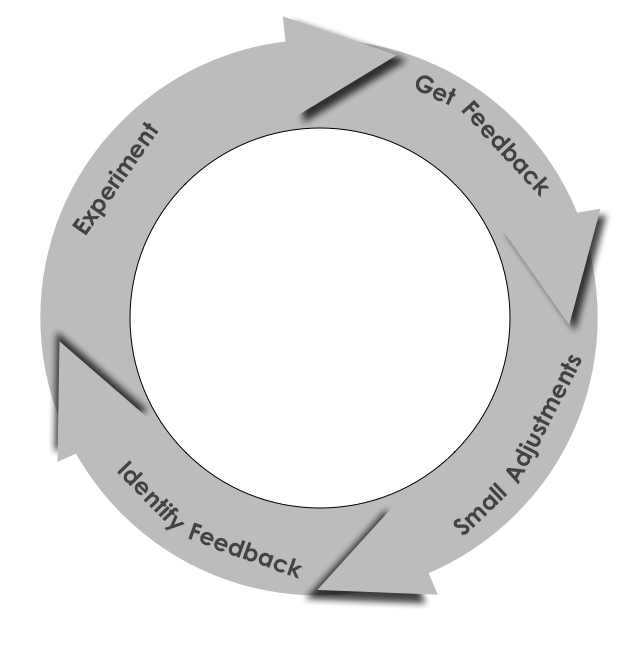

Use this pattern for designing experiments:

- Identify feedback

- Document the experiment

- Conduct the experiment

- Based on the results, decide what adjustments to make prior to the next experiment

Trial

- Design a FINE experiment:

  - Fast feedback

  - Inexpensive

  - No permission needed

  - Easy

- In addition to feedback you hope to see, list feedback that will tell you the experiment isn’t working. This helps prevent outcome and confirmation bias.

- Document the experiment. At a minimum, WriteItDown.

- Make sure the participants AgreeToTry.

- Decide how you will know when to end the experiment.

- Go!

Evaluate Feedback

- Be open to what the results reveal. Don’t be alarmed by unexpected results; use them as the seed for new experiments. If your experiments always succeed, are you really testing the system and your critical thinking?

- If you get the expected feedback, what did you learn about your system and critical thinking?

- Based on the feedback, think about related experiments you might conduct at the same time

- If practical, have someone else replicate your experiment to validate your findings

What Does it Look Like?

Big changes can evoke big fear. But small changes—experiments—aren’t an existential threat. Experiments allow you to learn, to manage risk, and to change. There are some caveats:

- If you know what answer you want, don’t conduct an experiment to “learn the answer.” The participants will see through the charade. Use AgreeToTry to get quick feedback on what participants think. This also engages others in the experiment.

- Company-wide digital transformation? It will look good on a resume, burn a ton of cash, and change nothing if you can’t break it down into small, manageable changes that collectively meet a larger goal. And it’s not an experiment. Use SmallBitesAlways to control the scope. This prevents betting the bank on an idea with no supporting data.

In God we trust. Everyone else brings data. —W. Edwards Deming

Experiments can provide data about process improvements and insight into options. If the experiment aims at process improvement, know what you can change. For example, you cannot simply remove two weeks from cycle time directly. But what factors influence cycle time? Which of these can you change? When you do change, what happens to cycle time?

When using experiments to evaluate options, try to have three or more options. This helps prevent binary, either/or situations and expands the solution domain.

Have a hypothesis for the experiment; otherwise you’re wasting resources. Determine in advance what data you will use to confirm the experiment results. Specify both confirming and disconfirming data. This helps prevent confirmation bias. Know your current status so you can detect changes that result from the experiment.
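One way to write an experiment down is as a small structured record that makes missing pieces obvious before you start. The sketch below is only an illustration: the `Experiment` class, its field names, the `gaps` helper, and the sample hypothesis are all hypothetical and not part of the pattern itself.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class Experiment:
    """A minimal written record of one experiment (hypothetical structure)."""

    hypothesis: str
    baseline: str  # current status, captured before the experiment starts
    confirming: List[str] = field(default_factory=list)     # feedback you hope to see
    disconfirming: List[str] = field(default_factory=list)  # feedback that says it isn't working
    end_by: Optional[date] = None  # how you will know when to stop

    def gaps(self) -> List[str]:
        """List what is still missing from the write-up."""
        missing = []
        if not self.hypothesis.strip():
            missing.append("hypothesis")
        if not self.baseline.strip():
            missing.append("baseline")
        if not self.confirming:
            missing.append("confirming data")
        if not self.disconfirming:
            missing.append("disconfirming data")
        if self.end_by is None:
            missing.append("end condition")
        return missing


# Sample values are made up for illustration.
draft = Experiment(
    hypothesis="Limiting work in progress shortens cycle time",
    baseline="",
    confirming=["cycle time drops over the next few weeks"],
)
print(draft.gaps())  # prints ['baseline', 'disconfirming data', 'end condition']
```

A record like this does not need tooling; the same fields on an index card or a wiki page serve the same purpose.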

Keep the Experiment FINE:

- Fast feedback. The longer an experiment runs, the more expensive it becomes, the more complicated, and generally the more permission needed. Most experiments in software development won’t have an absolute answer. What is the earliest you can get a GEFN (Good Enough For Now) answer? Can you find leading indicators that suggest how the result will turn out?

- Inexpensive. The more money you need for an experiment, the more likely you’re betting the bank on the results. This means you’re probably not running an experiment; you’re gathering data to justify a decision someone’s already made.

- No permission needed. Needing permission indicates an organizational choke hold on innovation and creativity. The more people who need to approve an experiment, the more likely conflicting agendas and desired results will creep into the experiment design.

- Easy. Most people have too much work stacked up (think WIP). Adding more work with experiments adds burden. Don’t create a separate “experiment” group or team. Doing so removes buy-in from the people who will implement the results (assuming a successful outcome) and loses knowledge of how the current work and the experiment interact.

Don’t ignore the feedback if it’s not what you expect. No experiment fails; all experiments provide data. It’s very possible to get unwanted feedback. This just means you need to go in a different direction than you’d originally planned.

And if all your experiments succeed, you’re not innovating. You might be improving efficiency, perhaps, if you’re lucky. But you can do better.

Software development is the single most difficult undertaking we attempt. The work itself is non-compressible, brain-intensive, and prone to errors of understanding and construction. Use experiments to learn what might improve things for you, in your context, without ignoring or betting your company’s future.

An Example

Suppose your organization has a document server to house important and operational documents, and you decided to build the document management system internally. You’ve used a traditional DBMS for this app. Over time, you’ve noticed performance degrade as the scale of the solution grew. You want to know if you can optimize your current solution or if you’ll need to move to a different technology.

Let’s set up the experiment using FINE:

- Review DB performance with DBA/DBEs and optimize as needed

- Fine tune the queries for performance

- If fine-tuning the queries does not shave off an average of 3 seconds, download and pilot a test with a document- or column-oriented database and compare results

How this experiment measures up against FINE:

- Fast feedback - Three opportunities for fast feedback: from the DBAs, from fine-tuning, and from a document-oriented database

- Inexpensive - All of the experiment components require only a small amount of labor

- No permission needed - With no need for additional funding and this being relevant to the work at hand, most organizations would not require teams to get permission to conduct this experiment

- Easy - Most technology teams would have the in-house skills to perform these steps
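The decision rule in this example can be sketched as a small comparison of timings taken before and after tuning. This is a hedged illustration: the function name `next_step`, the sample timings, and the choice of the arithmetic mean are assumptions; only the 3-second threshold comes from the example.

```python
from statistics import mean

# From the example: tuning should shave off an average of 3 seconds.
THRESHOLD_SECONDS = 3.0


def next_step(baseline_times, tuned_times, threshold=THRESHOLD_SECONDS):
    """Compare average response times and suggest the next step.

    Both arguments are sequences of response times in seconds, measured
    for the same set of queries before and after tuning.
    Returns (average improvement in seconds, suggested next step).
    """
    improvement = mean(baseline_times) - mean(tuned_times)
    if improvement >= threshold:
        return improvement, "stay with the current DBMS"
    return improvement, "pilot a document- or column-oriented database and compare"


# Hypothetical timings, in seconds, for illustration only.
before = [9.0, 11.0, 10.0]
after = [8.0, 9.5, 8.5]
improvement, decision = next_step(before, after)
print(f"average improvement: {improvement:.2f}s -> {decision}")
```

Writing the threshold down before measuring is the point: it fixes the disconfirming result in advance, so the numbers decide the next step rather than the hoped-for answer.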

Warning Signs

- Experiments last six months. They should last no more than a few weeks at the very most.

- Experiments require a large investment in expensive tools.

- Experiments become a rubber-stamp approval

- You experiment with fewer than three alternatives when there’s a question

- An experiment impacts production code

- The decision at the conclusion of the experiment is irreversible.

- Feedback indicates this is working well, and you don’t create the habit.

- Feedback indicates this is not going to help, and you adopt it anyway.

How To Fail Spectacularly

- Ignoring unexpected data

- Not conducting experiments

- Not keeping track of experiments and results

- Not sharing experiments and results with other related teams/departments
